Governed write-path infrastructure for enterprise AI execution.

NSHKR is the substrate where AI proposals become authorized operations: commands enter through typed product boundaries, resolve authority and workflow state, execute lower effects through governed connectors, and return receipts, evidence, projections, reviews, and replayable proof.

Execution Contract

The stack is built around the questions an enterprise has to answer after an AI-mediated action. The important artifact is not a prompt transcript. It is a durable chain from request to authorized operation to external effect to receipt-backed replay.

Actor, tenant, installation, request context.

Capability, policy, scope, review gate.

Operation context, causation, idempotency.

Operation class, manifest, lower instruction.

Effect receipt, status, failure class.

Evidence refs, attached artifacts, proof tokens.

Review case, decision, override, escalation.

AITrace DAG, predecessor refs, merge semantics.

Spatial topology, target host ID, BEAM node ref.

app_kit is the northbound surface that product code is allowed to touch for governed platform behavior. It accepts product-level commands and stable DTOs, exposes operator reads and review controls, and keeps products from stitching lower execution paths together by hand.

mezzanine owns reusable operational truth: binding registry, compiled run snapshots, workflow handoff, execution ledgers, operation receipts, evidence, projections, review state, audit, and operator actions. It records the facts that make the write path replayable.
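One way to picture a ledger that keeps the write path replayable is a receipt store keyed by idempotency key. The sketch below is illustrative only and is written in Python rather than the stack's own Elixir; `Receipt`, `ExecutionLedger`, and `execute_once` are hypothetical names, not mezzanine APIs, and an in-memory dict stands in for durable storage.

```python
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass(frozen=True)
class Receipt:
    """Hypothetical receipt shape: one recorded fact per executed operation."""
    idempotency_key: str
    operation_class: str
    status: str


class ExecutionLedger:
    """In-memory stand-in for a durable ledger keyed by idempotency key."""

    def __init__(self) -> None:
        self._receipts: Dict[str, Receipt] = {}

    def execute_once(self, key: str, operation_class: str,
                     effect: Callable[[], str]) -> Receipt:
        # A retried request with the same key returns the recorded receipt
        # instead of running the external effect a second time.
        if key in self._receipts:
            return self._receipts[key]
        receipt = Receipt(key, operation_class, effect())
        self._receipts[key] = receipt
        return receipt
```

Under these assumptions, a retry cannot duplicate an external effect, and the stored receipt is the fact that a later replay reads back.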

outer_brain owns semantic context, recall, normalized AI outcomes, and semantic failure carriers. citadel authorizes after resolution, when the operation class, manifest, side-effect class, required scope, and credential constraints are known.

jido_integration resolves connector manifests, operation descriptors, and credential leases into governed lower invocation. It lets provider mechanics be specific without leaking provider-shaped control flow into reusable platform surfaces.

execution_plane performs raw mechanics across HTTP, CLI, process, JSON-RPC, sandbox, terminal, filesystem, and future execution lanes. It emits receipts and raw facts. Product meaning, review state, and operational projections stay above it.

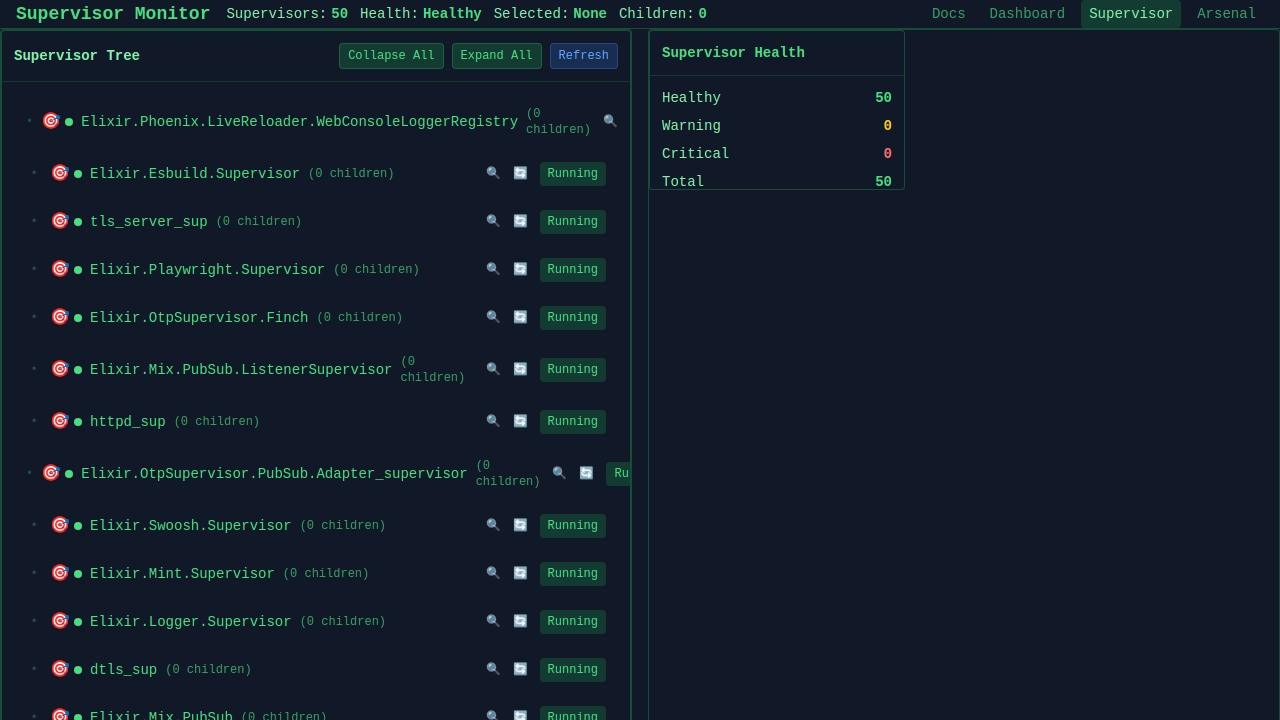

chassis is the self-replicating spatial substrate that maps virtual stack components to physical BEAM nodes. It coordinates host inventory, provisions remote nodes via native Elixir SSH bootstrap or pluggable adapters, and injects memory-decrypted age/SOPS credentials directly into systemd service environments.

AITrace records causal execution events with predecessor references so replay is not just emission order. stack_lab adds scanners, acceptance gates, negative controls, failure drills, and second-product validation so generality is tested instead of assumed.
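To illustrate why predecessor references matter, the following sketch orders events by causal dependency rather than by arrival. It is a minimal Python illustration using the standard library's `graphlib`; the event ids and the `replay_order` helper are hypothetical and do not reflect AITrace's actual schema or merge semantics.

```python
from graphlib import TopologicalSorter
from typing import Dict, List


def replay_order(predecessors: Dict[str, List[str]]) -> List[str]:
    """Return event ids so that every event follows all of its predecessors.

    `predecessors` maps an event id to the ids it causally depends on.
    Raises graphlib.CycleError if the references do not form a DAG.
    """
    return list(TopologicalSorter(predecessors).static_order())


# Events listed in arrival order, which is not the causal order.
emitted = {
    "receipt": ["execute"],
    "authorize": ["request"],
    "execute": ["authorize"],
    "request": [],
}
print(replay_order(emitted))
```

For a single causal chain like this one the order is fully determined. When branches merge, any order that respects the predecessor references is a valid replay.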

Authority follows resolution

A broad product request is not enough. Citadel authorizes the resolved operation plan after Mezzanine knows the operation class, manifest ref, binding ref, side-effect class, credential scope, and review constraints.
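A rough sketch of this ordering, assuming a plan whose fields start unresolved, might refuse to authorize until every field is known. `ResolvedPlan`, `authorize`, and the decision strings are hypothetical names chosen for illustration in Python; they are not Citadel's interface.

```python
from dataclasses import dataclass, fields
from typing import FrozenSet, Optional


@dataclass(frozen=True)
class ResolvedPlan:
    """Hypothetical plan shape; None means the field is not resolved yet."""
    operation_class: Optional[str] = None
    manifest_ref: Optional[str] = None
    binding_ref: Optional[str] = None
    side_effect_class: Optional[str] = None
    credential_scope: Optional[str] = None
    review_required: Optional[bool] = None


def authorize(plan: ResolvedPlan, granted_scopes: FrozenSet[str]) -> str:
    """Decide only on a fully resolved plan: 'allow', 'needs_review', or 'deny'."""
    missing = [f.name for f in fields(plan) if getattr(plan, f.name) is None]
    if missing:
        # A broad request with unresolved fields is rejected, not guessed at.
        raise ValueError(f"plan is not fully resolved: {missing}")
    if plan.credential_scope not in granted_scopes:
        return "deny"
    return "needs_review" if plan.review_required else "allow"
```

The point of the sketch is the ordering: the authorization decision is a function of the resolved plan, so it cannot be made before resolution has produced one.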

Provider specificity is data

A pack can bind issue tracking to Linear, code hosting to GitHub, or runtime work to Codex. Reusable surfaces still speak product roles, manifests, operation classes, receipts, and projections.
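As a minimal illustration of provider choice living in data, the Python sketch below keeps the bindings in a table and resolves them by product role. The role keys, `PACK_BINDINGS`, and `resolve_binding` are hypothetical; the real pack format is not shown in this document.

```python
from typing import Dict

# Hypothetical pack: product roles bound to providers, expressed as data.
PACK_BINDINGS: Dict[str, str] = {
    "issue_tracking": "linear",
    "code_hosting": "github",
    "runtime_work": "codex",
}


def resolve_binding(role: str, bindings: Dict[str, str]) -> str:
    """Look up the provider bound to a product role.

    The function has no provider-specific branches, so rebinding a role
    means changing the data, not the code.
    """
    try:
        return bindings[role]
    except KeyError:
        raise LookupError(f"no provider bound for role {role!r}") from None
```

Swapping a provider is then a one-entry change to the bindings, and code written against roles stays untouched.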

Proof is executable

stack_lab scenarios, AITrace DAGs, schema registries, release manifests, no-bypass scans, projection hashes, and proof tokens are not documentation after the fact. They are acceptance gates.
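A projection hash used as a gate could look roughly like the following Python sketch. It assumes projections are JSON-serializable and hashes a canonical encoding with SHA-256; how NSHKR actually computes projection hashes is not stated here, and `projection_hash` and `gate` are hypothetical helpers.

```python
import hashlib
import json
from typing import Any


def projection_hash(projection: Any) -> str:
    """Hash a JSON-serializable projection independently of dict key order."""
    canonical = json.dumps(projection, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def gate(projection: Any, expected_hash: str) -> None:
    """Fail loudly when a replayed projection drifts from the recorded hash."""
    actual = projection_hash(projection)
    if actual != expected_hash:
        raise AssertionError(f"projection drift: {actual} != {expected_hash}")
```

In this sketch a scenario records the hash once, and every later replay either reproduces it or fails the gate.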

Repository Atlas

Generated from live repository metadata across the NSHKR and North Shore AI ecosystem.

synapse

Headless, declarative multi-agent orchestration …

★ 45

flowstone

Asset-first data orchestration for Elixir/BEAM. …

★ 27

DSPex

Declarative Self Improving Elixir - DSPy Orchestration …

★ 19

ds_ex

DSPEx - Declarative Self-improving Elixir | A …

★ 17

ALTAR

The Agent & Tool Arbitration Protocol

★ 8

mabeam

Multi-agent systems framework for the BEAM platform - …

★ 8

pipeline_ex

Claude Code + Gemini AI collaboration orchestration …

★ 8

jido_hive

Phoenix coordination server and embeddable Elixir …

★ 2

flowstone_ai

FlowStone integration for altar_ai - AI-powered data …

★ 1

synapse_ai

Synapse integration for altar_ai - SDK-backed LLM …

★ 1

extravaganza

First proving-ground product app for the nshkr stack: a …

mezzanine

Neutral high-level reusable monorepo for the nshkr …

stack_coder

An advanced Elixir-based AI coding agent focused on …

gemini_ex

Elixir Interface / Adapter for Google Gemini LLM, for …

★ 31

claude_agent_sdk

An Elixir SDK for Claude Code - provides programmatic …

★ 30

codex_sdk

OpenAI Codex SDK written in Elixir

★ 22

agent_session_manager

Agent Session Manager - A comprehensive Elixir library …

★ 8

ollixir

Ollixir provides a first-class Elixir client with …

★ 5

altar_ai

Protocol-based AI adapter foundation for Elixir - …

★ 4

jules_ex

Elixir client SDK for the Jules API - orchestrate AI …

★ 2

notion_sdk

Native Elixir SDK for the Notion API — comprehensive, …

★ 2

amp_sdk

Elixir SDK for the Amp CLI — provides a comprehensive …

★ 1

github_ex

Native Elixir SDK for the GitHub REST API — …

★ 1

mcp_client

Full-featured Elixir client for the Model Context …

★ 1

vllm

vLLM - High-throughput, memory-efficient LLM inference …

★ 1

antigravity_cli_sdk

Elixir SDK for the Google Antigravity CLI (agy) — …

cli_subprocess_core

Foundational Elixir runtime library for deterministic …

crucible_prompts

Prompt and parsing utilities for Crucible and NSAI. …

cursor_cli_sdk

Elixir SDK for the Cursor Agent CLI (agent) — …

external_runtime_transport

An Elixir-first external runtime transport foundation …

gemini_cli_sdk

An Elixir SDK for the Gemini CLI — Build AI-powered …

linear_sdk

Elixir SDK for Linear built on Prismatic, using a …

llama_cpp_sdk

Barebones Elixir wrapper and integration surface for …

nsai_llm

Shared LLM Actions for NSAI runtimes. Wraps …

self_hosted_inference_core

Core Elixir primitives for building reliable …

json_remedy

A practical, multi-layered JSON repair library for …

★ 32

snakepit

High-performance, generalized process pooler and …

★ 11

rag_ex

Elixir RAG library with multi-LLM routing (Gemini, …

★ 9

snakebridge

Compile-time Elixir code generator for Python library …

★ 8

gepa_ex

Elixir implementation of GEPA: LLM-driven evolutionary …

★ 5

tinkex_cookbook

Elixir port of tinker-cookbook: training and evaluation …

★ 3

nsai_gateway

Unified API gateway for the NSAI …

★ 2

portfolio_core

Hexagonal architecture core for Elixir RAG systems. …

★ 2

slither

Lightweight Elixir runtime for composing and executing …

★ 2

tinkex

Elixir SDK for the Tinker ML platform—LoRA training, …

★ 2

citadel

The command and control layer for the AI-powered …

★ 1

command

Core Elixir library for AI agent orchestration - …

★ 1

execution_plane

Execution Plane is an Elixir/OTP runtime substrate for …

★ 1

nsai_registry

Service discovery and registry for the NSAI …

★ 1

nsai_work

NSAI.Work - Unified job scheduler for North-Shore-AI …

★ 1

pilot

Interactive CLI and REPL for the NSAI ecosystem—unified …

★ 1

skill_ex

Claude Skill Aggregator

★ 1

tiktoken_ex

Pure Elixir TikToken-style byte-level BPE tokenizer …

★ 1

app_kit

Shared app-facing surface monorepo for the nshkr …

chassis

Spatial & deployment plane for NSHKR: standalone …

gepa_buildout

Deterministic GEPA buildout examples and domain task …

gepa_framework

Reusable GEPA optimizer framework for typed candidate …

ground_plane

Shared lower infrastructure monorepo for the nshkr …

hf_hub_ex

Elixir client for HuggingFace Hub—dataset/model …

hf_peft_ex

Elixir port of HuggingFace's PEFT (Parameter-Efficient …

inference

Reusable Elixir semantic inference contracts, adapters, …

outer_brain

Semantic runtime above Citadel for raw language intake, …

portfolio_index

Production adapters and pipelines for PortfolioCore. …

portfolio_manager

AI-native personal project intelligence system - …

stack_lab

Local distributed-development harness and proving …

tinkerer

Chiral Narrative Synthesis workspace for Thinker/Tinker …

trinity_framework

Reusable TRINITY router and coordination framework for …

dexterity

Code Intelligence: Token-budgeted codebase context for …

★ 4

elixir_dashboard

A Phoenix LiveView performance monitoring dashboard for …

★ 3

blitz

Small parallel command runner for Elixir and Mix …

★ 1

elixir_tracer

Local-first observability for Elixir with New Relic API …

★ 1

pristine

Shared runtime substrate and build-time bridge for …

★ 1

prompt_runner_sdk

Prompt Runner SDK - Elixir toolkit for orchestrating …

★ 1

weld

Deterministic Hex package projection for Elixir …

★ 1

atlas_once

Atlas Once is a filesystem-first personal memory system …

coolify_ex

Generic Elixir tooling for triggering, monitoring, and …

portfolio_coder

Code Intelligence Platform: Repository analysis, …

prismatic

GraphQL-native Elixir SDK platform and monorepo for …

superlearner

OTP Supervisor Educational Platform

★ 8

apex

Core Apex framework for OTP supervision and monitoring

★ 4

apex_ui

Web UI for Apex OTP supervision and monitoring tools

★ 4

arsenal

Metaprogramming framework for automatic REST API …

★ 4

arsenal_plug

Phoenix/Plug adapter for Apex Arsenal framework

★ 3

duckdb_ex

DuckDB driver client in Elixir

★ 2

ex_topology

Pure Elixir library for graph topology, TDA, and …

★ 2

weaviate_ex

Modern Elixir client for Weaviate vector database with …

★ 2

embed_ex

Vector embeddings service for Elixir—multi-provider …

★ 1

nx_penalties

Composable regularization penalties for Elixir Nx. …

trinity_coordinator

TRINITY in Elixir (An Evolved LLM Coordinator): route …

★ 5

ChronoLedger

Hardware-Secured Temporal Blockchain

★ 2

cns

Chiral Narrative Synthesis - Dialectical reasoning …

★ 1

EADS

Evolutionary Autonomous Development System

anti_agents

Anti Agents - Inspired by Sakana AI's String Seed of …

cns_ui

Phoenix LiveView interface for CNS dialectical …

ml_musings

Foundations: A premium, hands-on educational curriculum …

LlmGuard

AI Firewall and guardrails for LLM-based Elixir …

★ 9

ExFairness

Fairness and bias detection library for Elixir AI/ML …

★ 1

crucible_examples

Interactive Phoenix LiveView demonstrations of the …

★ 1

crucible_harness

Experimental research framework for running AI …

★ 1

crucible_xai

Explainable AI (XAI) tools for the Crucible framework

★ 1

ExDataCheck

Data validation and quality library for ML pipelines in …

cns_crucible

crucible_adversary

Adversarial testing and robustness evaluation for the …

crucible_bench

Statistical testing and analysis framework for AI …

crucible_bumblebee

Bumblebee, Axon, and Nx adapter layer for compiling …

crucible_datasets

Dataset management and caching for AI research …

crucible_deployment

ML model deployment for the Crucible ecosystem. vLLM …

crucible_ensemble

Multi-model ensemble voting strategies for LLM …

crucible_factorization

Nx SVD/SVF factorization primitives for neural network …

crucible_feedback

ML feedback loop management for the Crucible ecosystem. …

crucible_framework

CrucibleFramework: A scientific platform for LLM …

crucible_hedging

Request hedging for tail latency reduction in …

crucible_ir

Intermediate Representation for the Crucible ML …

crucible_kitchen

Industrial ML training orchestration - backend-agnostic …

crucible_model_registry

ML model registry for the Crucible ecosystem. Artifact …

crucible_policy

Routing, gating, fusion, uncertainty, verifier, shared …

crucible_safetensors

SafeTensors parsing, validation, bounded slicing, …

crucible_signal

Canonical Elixir signal ontology for transformer …

crucible_signal_trace

Bounded forward-pass trace schema and persistence …

crucible_tap

Tap-plan and probe-contract library for selecting, …

crucible_telemetry

Advanced telemetry collection and analysis for AI …

crucible_tensor_patch

Deterministic tensor patch plans, patch application, …

crucible_trace

Structured causal reasoning chain logging for LLM …

crucible_train

ML training orchestration for the Crucible ecosystem. …

crucible_ui

Phoenix LiveView dashboard for the Crucible ML …

datasets_ex

Dataset management library for ML experiments—loaders …

eval_ex

Model evaluation harness for standardized …

hf_datasets_ex

HuggingFace Datasets for Elixir - A native Elixir port …

metrics_ex

Metrics aggregation and alerting for ML …

training_ir

Training IR for reproducible ML jobs across Crucible …
